A Review of Nonlinear Time Series Models

Ran Wang

Contents

1 Parametric Model

TAR

STAR

Markov Switching State Space Model

2 Nonparametric Model

Nonparametric Kernel Estimation

TAR: Threshold Autoregressive

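The TAR model assumes that the parameters of the time series model differ across regimes, where the regime at time \( t \) is determined by which threshold interval the threshold variable falls into.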

Model Specification

\[ y_t=\sum_{i=1}^{p}\phi_i^{j}y_{t-i}+\sigma^j\epsilon_t \] \[ \text{if}~r_{j-1} < z_t < r_j \]

where \( \phi_i^{j} \) and \( \sigma^j \) are the parameters of regime \( j \), \( r_j \) are the thresholds, and \( z_t \) is the threshold variable.

SETAR (Self-Exciting Threshold Autoregressive)

Setting the threshold variable to a lagged value of the series itself, \( z_t=y_{t-k} \), gives the SETAR(k) model.

Model Specification

\[ y_t=\sum_{i=1}^{p}\phi_i^{j}y_{t-i}+\sigma^j\epsilon_t \] \[ \text{if}~r_{j-1} < y_{t-k} < r_j \]

where \( \phi_i^{j} \) and \( \sigma^j \) are the parameters of regime \( j \), and \( y_{t-k} \) is the threshold variable.
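To make the specification concrete, here is a minimal simulation sketch of a two-regime SETAR process. The function name `simulate_setar`, the burn-in length, and the parameter values are illustrative assumptions, not part of the original slides; observations exactly on the threshold are assigned to the lower regime.

```python
import numpy as np

def simulate_setar(n, phi, sigma, r, k=1, burn=200, seed=0):
    """Simulate a two-regime SETAR(k) series (hypothetical helper).

    phi   : two coefficient lists (phi_1..phi_p), one per regime
    sigma : two innovation scales, one per regime
    r     : single threshold; regime 0 if y_{t-k} <= r, else regime 1
    """
    rng = np.random.default_rng(seed)
    p = len(phi[0])
    start = max(p, k)
    y = np.zeros(n + burn + start)
    for t in range(start, len(y)):
        j = 0 if y[t - k] <= r else 1        # regime picked by the lagged value
        lags = y[t - p:t][::-1]              # (y_{t-1}, ..., y_{t-p})
        y[t] = np.dot(phi[j], lags) + sigma[j] * rng.standard_normal()
    return y[-n:]                            # drop the burn-in

y = simulate_setar(500, phi=[[0.6], [-0.4]], sigma=[1.0, 0.5], r=0.0, k=1)
```

With these assumed values the series is pulled upward when the lagged value is below zero and reverts with a sign flip when it is above zero.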

Model Estimation: Tsay's Approach

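Choose the delay \( d \) (the lag of the threshold variable, written \( k \) above)

- 1 For given \( d \) and \( p \), let \( t=\max(d,p)+1,\dots,n \) and collect the threshold values \( Y_d=(y_h,\dots,y_{n-d}) \), where \( h=\max(1,p-d+1) \).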

- 2 Run recursive least squares estimation on the arranged autoregression:

\[ y_{\pi_i}=\sum_{j=1}^{p}\phi_jy_{\pi_i-j}+\sigma\epsilon_{\pi_i} \]

where \( \pi_i \) is the time index for which \( y_{\pi_i-d} \) is the i-th smallest value in \( Y_d \)

(e.g. \( \pi_1=2+d \) if \( y_2 \) is the smallest value in \( Y_d \))

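- 3 Based on the residuals \( \hat{e}_{\pi_i} \) from step 2, run another regression:

\[ \hat{e}_{\pi_i}=\sum_{j=1}^{p}\alpha_jy_{\pi_i-j}+u_{\pi_i} \]

and compute the F-statistic \( F(p,d) \) for the null hypothesis \( \alpha_1=\dots=\alpha_p=0 \) (no threshold nonlinearity).

- 4 Choose the best \( d \), such that

\[ d=\arg\max_{v\in S} F(p,v) \]

where \( S \) is the set of candidate values of \( d \).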
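Steps 1 to 4 can be sketched as follows. This is a simplified illustration under stated assumptions rather than a faithful reproduction of Tsay's procedure: the function name `tsay_f`, the start-up size `m0`, the absence of an intercept (matching the equations above), and the toy data are all assumptions. Refitting OLS on a growing sample is used in place of a recursive update; it yields the same one-step predictive residuals.

```python
import numpy as np

def tsay_f(y, p, d, m0=None):
    """F-statistic for threshold nonlinearity at delay d (hypothetical helper)."""
    y = np.asarray(y, dtype=float)
    t = np.arange(max(d, p), len(y))             # 0-based response times
    X = np.column_stack([y[t - j] for j in range(1, p + 1)])
    order = np.argsort(y[t - d], kind="stable")  # step 1: arrange cases by y_{t-d}
    X, resp = X[order], y[t][order]
    n_eff = len(resp)
    if m0 is None:
        m0 = max(p + 5, n_eff // 10)             # cases used to start the recursion
    e = np.empty(n_eff - m0)
    for m in range(m0, n_eff):                   # step 2: growing-sample least squares
        Xm, ym = X[:m], resp[:m]
        beta = np.linalg.lstsq(Xm, ym, rcond=None)[0]
        lev = X[m] @ np.linalg.solve(Xm.T @ Xm, X[m])
        # standardized one-step-ahead predictive residual
        e[m - m0] = (resp[m] - X[m] @ beta) / np.sqrt(1.0 + lev)
    Z = X[m0:]                                   # step 3: regress residuals on the lags
    alpha = np.linalg.lstsq(Z, e, rcond=None)[0]
    u = e - Z @ alpha
    return ((e @ e - u @ u) / p) / (u @ u / (len(e) - p))

# Toy data: a two-regime SETAR with true delay 1 (assumed values).
rng = np.random.default_rng(0)
y = np.zeros(400)
for t in range(1, 400):
    phi = 0.6 if y[t - 1] <= 0 else -0.6
    y[t] = phi * y[t - 1] + rng.standard_normal()

S = [1, 2, 3]
F = {d: tsay_f(y, p=2, d=d) for d in S}
best_d = max(F, key=F.get)                       # step 4: delay with the largest F
```

The dictionary `F` holds one statistic per candidate delay, and `best_d` is the delay that maximizes it.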

Find \( r_j \)

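Graphical tools: a scatter plot of the t-statistics of the recursive least squares estimates of \( \phi \) (from step 2 above) against the ordered values of the threshold variable. Candidate thresholds are the values at which the t-statistics change behavior.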

Estimate Parameters \( \phi^j \), \( \sigma^j \)

Given \( d \), \( p \) and \( r_j \), split the sample by regime and estimate \( \phi^j \) and \( \sigma^j \) in each subsample using OLS:

\[ y_t=\sum_{i=1}^{p}\hat{\phi}_i^{j}y_{t-i}+\hat{\sigma}^j\epsilon_t \] \[ \text{if}~r_{j-1} < y_{t-d} < r_j \]
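A minimal sketch of this split-sample OLS step, assuming the delay, order and thresholds are already known. The helper name `fit_setar` and the simulated data are assumptions for illustration; values equal to a threshold are assigned to the lower regime.

```python
import numpy as np

def fit_setar(y, p, d, thresholds):
    """Regime-wise OLS for a SETAR model (hypothetical helper).

    Returns {regime: (phi_hat, sigma_hat)}; thresholds must be sorted.
    """
    y = np.asarray(y, dtype=float)
    t = np.arange(max(p, d), len(y))
    X = np.column_stack([y[t - i] for i in range(1, p + 1)])
    resp, z = y[t], y[t - d]
    regime = np.searchsorted(thresholds, z)     # z <= r_1 -> 0, r_1 < z <= r_2 -> 1, ...
    fits = {}
    for j in range(len(thresholds) + 1):
        mask = regime == j
        phi = np.linalg.lstsq(X[mask], resp[mask], rcond=None)[0]
        resid = resp[mask] - X[mask] @ phi
        sigma = np.sqrt(resid @ resid / (mask.sum() - p))
        fits[j] = (phi, sigma)
    return fits

# Simulated two-regime data with assumed parameters 0.6 and -0.4.
rng = np.random.default_rng(0)
y = np.zeros(3000)
for t in range(1, len(y)):
    phi_true = 0.6 if y[t - 1] <= 0.0 else -0.4
    y[t] = phi_true * y[t - 1] + rng.standard_normal()

fits = fit_setar(y, p=1, d=1, thresholds=[0.0])
```

Each entry of `fits` holds the estimated coefficient vector and innovation scale for one regime.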

Forecasting Performance:

As with VAR models, multi-step forecasts from SETAR models can be computed easily using Monte Carlo simulation.
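The Monte Carlo idea can be sketched as follows: simulate many future paths from the fitted model and summarize them. The function name `setar_mc_forecast`, the two-regime restriction, the Gaussian innovations, and the 5% and 95% bands are assumptions made for this illustration.

```python
import numpy as np

def setar_mc_forecast(history, phi, sigma, r, k, horizon, n_paths=2000, seed=0):
    """Monte Carlo forecast for a two-regime SETAR(k) model (hypothetical helper)."""
    rng = np.random.default_rng(seed)
    phi = np.asarray(phi, dtype=float)           # shape (2, p)
    sigma = np.asarray(sigma, dtype=float)       # shape (2,)
    p = phi.shape[1]
    need = max(p, k)
    paths = np.empty((n_paths, need + horizon))
    paths[:, :need] = np.asarray(history, dtype=float)[-need:]
    for t in range(need, need + horizon):
        j = (paths[:, t - k] > r).astype(int)    # regime of each simulated path
        lags = paths[:, t - p:t][:, ::-1]        # (y_{t-1}, ..., y_{t-p}) per path
        mean = np.sum(phi[j] * lags, axis=1)
        paths[:, t] = mean + sigma[j] * rng.standard_normal(n_paths)
    sims = paths[:, need:]
    return sims.mean(axis=0), np.percentile(sims, [5, 95], axis=0)

point, bands = setar_mc_forecast([0.3, -0.1, 0.8], phi=[[0.6], [-0.4]],
                                 sigma=[1.0, 0.5], r=0.0, k=1, horizon=5)
```

Simulation is needed because, beyond one step ahead, the future regime is itself random, so the forecast mean has no simple closed form.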

In practice, SETAR is simple yet flexible enough to capture the nonlinearity in many time series.

STAR (Smooth Transition Autoregressive)

Unlike the TAR model, the STAR model assumes that the regime switch happens smoothly rather than in an abrupt or discontinuous way.

Model Specification:

Suppose there are two regimes, 1 and 2:

\[ y_t=\sum_{i=1}^{p}\phi_i^1y_{t-i}(1-G(z_t))+\sum_{i=1}^{p}\phi_i^2y_{t-i}G(z_t)+\epsilon_t \]

where \( G(z_t) \) is a smooth transition function with \( 0 \le G(z_t) \le 1 \), and \( z_t \) is the transition variable.

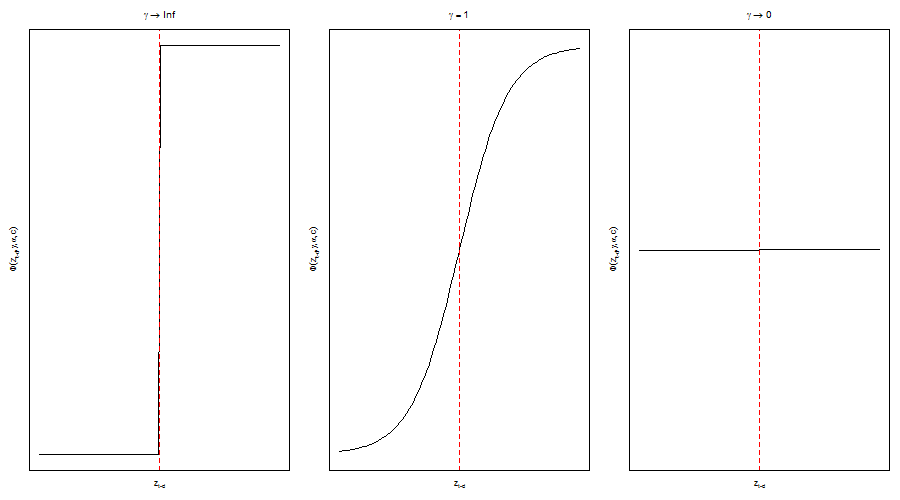

- 1 Logistic STAR:

\[ G(z_t)=\frac{1}{1+e^{-\gamma(z_t-c)}} \]

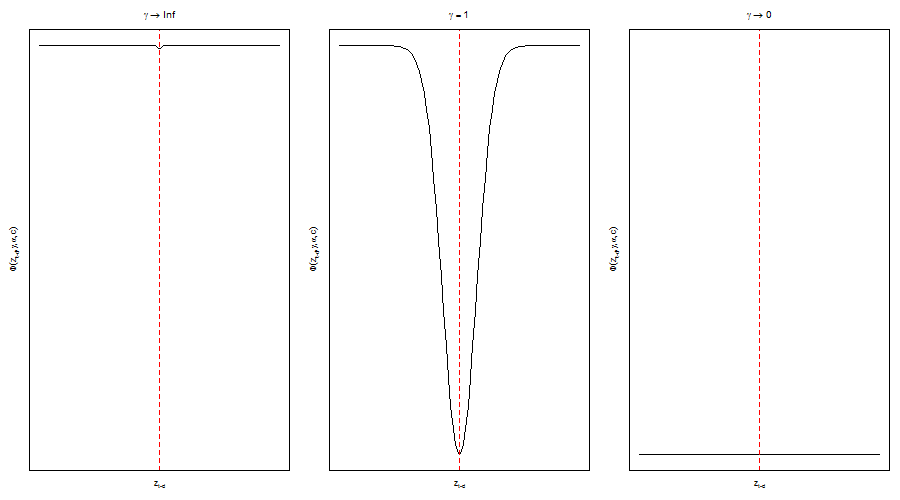

- 2 Exponential STAR:

\[ G(z_t)=1-e^{-\gamma(z_t-c)^2} \]

In both cases \( \gamma>0 \) controls how fast the transition is and \( c \) is its location.
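The two transition functions are short enough to write out directly. The function names below are illustrative assumptions; the formulas are the ones given above.

```python
import numpy as np

def logistic_G(z, gamma, c):
    """Logistic transition: rises monotonically from 0 to 1 around z = c."""
    z = np.asarray(z, dtype=float)
    return 1.0 / (1.0 + np.exp(-gamma * (z - c)))

def exponential_G(z, gamma, c):
    """Exponential transition: 0 at z = c, approaching 1 as |z - c| grows."""
    z = np.asarray(z, dtype=float)
    return 1.0 - np.exp(-gamma * (z - c) ** 2)

grid = np.linspace(-2.0, 2.0, 9)
g_log = logistic_G(grid, gamma=5.0, c=0.0)
g_exp = exponential_G(grid, gamma=5.0, c=0.0)
```

The logistic form distinguishes low from high values of \( z_t \), whereas the exponential form is symmetric and distinguishes values near \( c \) from values far from it. For large \( \gamma \) the logistic function approaches the step function of a TAR model.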

Model Estimation:

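Using nonlinear least squares:

\[ \hat{\Theta}=\arg\min_{\Theta}\sum_{t}\epsilon_t(\Theta)^2,\quad \Theta=(\phi^1,\phi^2,\gamma,c) \]

where

\[ \epsilon_t(\Theta)=y_t-\sum_{i=1}^{p}\phi_i^1y_{t-i}(1-G(z_t|\gamma,c))-\sum_{i=1}^{p}\phi_i^2y_{t-i}G(z_t|\gamma,c) \]

For fixed \( (\gamma,c) \) the model is linear in \( \phi^1 \) and \( \phi^2 \), so these can be estimated by OLS and the nonlinear search reduces to \( (\gamma,c) \).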
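One simple way to carry out this minimization is a grid search over \( (\gamma,c) \) with OLS for the autoregressive coefficients at each grid point. The sketch below assumes a logistic transition and a transition variable \( z_t=y_{t-d} \); the name `fit_lstar`, the grids, and the simulated data are illustrative assumptions. In practice the grid optimum is often used as the starting value for a numerical optimizer.

```python
import numpy as np

def fit_lstar(y, p, d, gammas, cs):
    """Grid-search NLS for a logistic STAR model (hypothetical helper).

    Returns (ssr, gamma, c, phi1, phi2) at the grid point with the smallest SSR.
    """
    y = np.asarray(y, dtype=float)
    t = np.arange(max(p, d), len(y))
    X = np.column_stack([y[t - i] for i in range(1, p + 1)])
    resp, z = y[t], y[t - d]
    best = None
    for gamma in gammas:
        for c in cs:
            G = 1.0 / (1.0 + np.exp(-gamma * (z - c)))
            # regressors of regime 1 weighted by (1 - G), of regime 2 by G
            W = np.hstack([X * (1 - G)[:, None], X * G[:, None]])
            coef = np.linalg.lstsq(W, resp, rcond=None)[0]
            ssr = float(np.sum((resp - W @ coef) ** 2))
            if best is None or ssr < best[0]:
                best = (ssr, gamma, c, coef[:p], coef[p:])
    return best

# Simulated LSTAR data with assumed parameters.
rng = np.random.default_rng(1)
y = np.zeros(300)
for t in range(1, 300):
    G = 1.0 / (1.0 + np.exp(-5.0 * y[t - 1]))
    y[t] = (0.7 * (1 - G) - 0.5 * G) * y[t - 1] + 0.5 * rng.standard_normal()

ssr, gamma, c, phi1, phi2 = fit_lstar(y, p=1, d=1, gammas=[1.0, 5.0, 25.0],
                                      cs=np.linspace(-0.5, 0.5, 5))
```

Because the linear AR model is the special case \( \phi^1=\phi^2 \), the fitted sum of squared residuals can never exceed that of a linear AR fit on the same sample.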

Forecasting Performance:

Forecasting from STAR models can be computed using Monte Carlo simulations. STAR can give better performance than TAR when the smooth transition assumption is correct.

Markov Switching State Space Models

The model's parameters depend on a hidden state, which switches between different regimes following a Markov chain.

Model Specification:

Given the basic state space model:

\[ \alpha_{t+1}=d_t+T_{t}\alpha_t+H_{t}\eta_{t} \] \[ y_t=c_t+Z_t\alpha_t+G_t\varepsilon_t \]

Letting the system matrices depend on the hidden state \( S_t \) instead of directly on time \( t \), we get the Markov switching state space model:

\[ \alpha_{t+1}=d_{S_t}+T_{S_t}\alpha_t+H_{S_t}\eta_{t} \] \[ y_t=c_{S_t}+Z_{S_t}\alpha_t+G_{S_t}\varepsilon_t \]

Estimation: Kalman filtering algorithm

The updating equations are:

\[ a_{t|t}=a_{t|t-1}+K_tv_t \] \[ P_{t|t}=P_{t|t-1}-P_{t|t-1}Z'_t(K_t)' \]

where

\[ v_{t}=y_t-c_t-Z_ta_{t|t-1}\quad\text{(prediction error)} \] \[ F_t=Z_tP_{t|t-1}Z_t'+G_tG_t'\quad\text{(error covariance matrix)} \] \[ K_t=P_{t|t-1}Z_t'(F_t)^{-1}\quad\text{(gain matrix)} \]
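A single updating step translates almost line by line into NumPy. The function name `kalman_update` and the scalar example values are assumptions for illustration.

```python
import numpy as np

def kalman_update(a_pred, P_pred, y, c, Z, G):
    """One Kalman updating step, given the predicted state mean and covariance."""
    v = y - c - Z @ a_pred                    # prediction error
    F = Z @ P_pred @ Z.T + G @ G.T            # error covariance matrix
    K = P_pred @ Z.T @ np.linalg.inv(F)       # gain matrix
    a_filt = a_pred + K @ v
    P_filt = P_pred - P_pred @ Z.T @ K.T
    return a_filt, P_filt, v, F

# Scalar example: prior mean 0 with variance 1, unit measurement noise, y = 2.
a_filt, P_filt, v, F = kalman_update(
    a_pred=np.array([0.0]), P_pred=np.array([[1.0]]),
    y=np.array([2.0]), c=np.array([0.0]),
    Z=np.array([[1.0]]), G=np.array([[1.0]]),
)
```

In this example the prior and the observation are equally precise, so the gain is 0.5, the filtered mean moves halfway to the observation, and the variance is halved.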

Under the normality assumption on the error terms \( \eta_{t},\varepsilon_t \), we can use MLE to estimate the unknown parameters \( \Theta \):

\[ \log L(\Theta)=-\frac{Tk}{2}\ln 2\pi-\frac{1}{2}\sum_{t=1}^{T}\ln|F_t|-\frac{1}{2}\sum_{t=1}^{T}v_t'F_{t}^{-1}v_t \]

where \( k \) is the dimension of \( y_t \).
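The log-likelihood is accumulated as a by-product of running the filter over the sample. The sketch below assumes time-invariant system matrices and uses the prediction step implied by the state equation, \( a_{t+1|t}=d+Ta_{t|t} \) and \( P_{t+1|t}=TP_{t|t}T'+HH' \); the function name `kalman_loglik` and the local level example are illustrative assumptions.

```python
import numpy as np

def kalman_loglik(y, d, T, H, c, Z, G, a0, P0):
    """Gaussian log-likelihood of a time-invariant state space model.

    y is a (n_obs, k) array; a0, P0 are the mean and covariance of the
    initial predicted state.
    """
    a, P = a0, P0
    ll = 0.0
    for yt in y:
        v = yt - c - Z @ a                         # prediction error
        F = Z @ P @ Z.T + G @ G.T                  # its covariance
        Finv = np.linalg.inv(F)
        ll += -0.5 * (len(v) * np.log(2 * np.pi)
                      + np.linalg.slogdet(F)[1]
                      + v @ Finv @ v)
        K = P @ Z.T @ Finv                         # updating step
        a_filt = a + K @ v
        P_filt = P - P @ Z.T @ K.T
        a = d + T @ a_filt                         # prediction step
        P = T @ P_filt @ T.T + H @ H.T
    return ll

# Local level model: random-walk state observed with noise (assumed values).
I1 = np.eye(1)
obs = np.array([[0.1], [0.4], [0.2], [0.9]])
ll = kalman_loglik(obs, d=np.zeros(1), T=I1, H=0.5 * I1, c=np.zeros(1),
                   Z=I1, G=I1, a0=np.zeros(1), P0=10.0 * I1)
```

Maximum likelihood estimation then amounts to passing the negative of this function, viewed as a function of the unknown parameters, to a numerical optimizer.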

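With Markov switching, the updating equations must be computed conditional on \( S_{t-1}=i \) and \( S_t=j \). The new updating equations are:

\[ a_{t|t}^{i,j}=a_{t|t-1}^{i,j}+K_t^{i,j}v_t^{i,j} \] \[ P^{i,j}_{t|t}=P_{t|t-1}^{i,j}-P_{t|t-1}^{i,j}Z'_j(K^{i,j}_t)' \]

where

\[ v_{t}^{i,j}=y_t-c_j-Z_ja_{t|t-1}^{i,j}\quad\text{(prediction error)} \] \[ F_t^{i,j}=Z_jP_{t|t-1}^{i,j}Z_j'+G_jG_j'\quad\text{(error covariance matrix)} \] \[ K_t^{i,j}=P_{t|t-1}^{i,j}Z_j'(F_t^{i,j})^{-1}\quad\text{(gain matrix)} \]

and \( c_j \), \( Z_j \), \( G_j \) are the system matrices in state \( S_t=j \).

However, this exact filter is computationally infeasible: every pair of states \( (i,j) \) needs its own filtered mean and covariance, and without approximation the number of state histories to compute and store grows as \( k^t \).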

Estimation: Kim's filtering algorithm

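Kim's filter keeps the computation feasible by collapsing the \( k^2 \) posteriors \( a_{t|t}^{i,j} \), \( P_{t|t}^{i,j} \) into \( k \) posteriors at each step. The updating equations are: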

\[ a_{t|t}^j=\frac{\sum_{i=1}^{k}P(S_t=j,S_{t-1}=i|I_t)a_{t|t}^{i,j}}{P(S_t=j|I_t)} \] \[ P_{t|t}^j=\frac{\sum_{i=1}^{k}P(S_t=j,S_{t-1}=i|I_t)[P_{t|t}^{i,j}+(a_{t|t}^j-a_{t|t}^{i,j})(a_{t|t}^j-a_{t|t}^{i,j})']}{P(S_t=j|I_t)} \]

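where the state probabilities follow from Bayes' rule, with transition probabilities \( P_{ij}=P(S_t=j|S_{t-1}=i) \):

\[ P(S_t=j,S_{t-1}=i|I_t)=\frac{f(y_t|S_t=j,S_{t-1}=i,I_{t-1})P_{ij}P(S_{t-1}=i|I_{t-1})}{\sum_{m=1}^{k}\sum_{n=1}^{k}f(y_t|S_t=n,S_{t-1}=m,I_{t-1})P_{mn}P(S_{t-1}=m|I_{t-1})} \] \[ P(S_t=j|I_t)=\sum_{i=1}^{k}P(S_t=j,S_{t-1}=i|I_t) \]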
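The collapsing step can be sketched as a small function that takes the \( k^2 \) pairwise posteriors and the joint probabilities \( P(S_t=j,S_{t-1}=i|I_t) \) and returns the \( k \) collapsed posteriors. The name `kim_collapse` and the array layout (first index \( i \), second index \( j \)) are assumptions for illustration.

```python
import numpy as np

def kim_collapse(a_ij, P_ij, joint):
    """Collapse k*k posteriors into k (hypothetical helper).

    a_ij  : (k, k, m) filtered means a_{t|t}^{i,j}
    P_ij  : (k, k, m, m) filtered covariances P_{t|t}^{i,j}
    joint : (k, k) with joint[i, j] = P(S_{t-1}=i, S_t=j | I_t)
    """
    k, _, m = a_ij.shape
    marg = joint.sum(axis=0)                      # P(S_t=j | I_t)
    a_j = np.zeros((k, m))
    P_j = np.zeros((k, m, m))
    for j in range(k):
        w = joint[:, j] / marg[j]                 # weights over the previous state i
        a_j[j] = w @ a_ij[:, j]                   # probability-weighted mean
        for i in range(k):
            diff = a_j[j] - a_ij[i, j]
            P_j[j] += w[i] * (P_ij[i, j] + np.outer(diff, diff))
    return a_j, P_j, marg

# Two states, one-dimensional state vector (assumed values).
a_ij = np.array([[[0.0], [1.0]],
                 [[2.0], [1.0]]])
P_ij = np.ones((2, 2, 1, 1))
joint = np.array([[0.25, 0.10],
                  [0.25, 0.40]])
a_j, P_j, marg = kim_collapse(a_ij, P_ij, joint)
```

The outer-product term adds the spread between the pairwise means to the collapsed covariance, so the collapsed posterior is a moment-matched approximation of the underlying mixture.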

Forecasting Performance

Since Markov switching state space models often capture important properties of macroeconomic and financial market data, they can give better economic forecasts than models that ignore regime switching.

Nonparametric Kernel Estimation

Model Specification

Suppose the true nonlinear data-generating process of the time series is:

\[ y_t=f(y_{t-1},...,y_{t-p})+\epsilon_t \]

The nonparametric kernel (Nadaraya-Watson) estimator of \( f \) is:

\[ \hat{f}(y_1,...,y_p)=\frac{\sum_{t=p+1}^{n}\prod_{i=1}^{p}K(\frac{y_i-Y_{t-i}}{h_i})Y_t}{\sum_{t=p+1}^{n}\prod_{i=1}^{p}K(\frac{y_i-Y_{t-i}}{h_i})} \]

where \( K \) is a kernel function and \( h_i \) is the bandwidth for lag \( i \).
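A minimal sketch of this estimator with a Gaussian product kernel. The function name `nw_forecast` and the choice of a Gaussian kernel are assumptions; the slides do not fix a particular kernel.

```python
import numpy as np

def nw_forecast(y, x0, h):
    """Kernel estimate of f at x0 = (y_{t-1}, ..., y_{t-p}).

    h is either one bandwidth for all lags or a length-p sequence.
    """
    y = np.asarray(y, dtype=float)
    x0 = np.asarray(x0, dtype=float)
    p = len(x0)
    h = np.broadcast_to(np.asarray(h, dtype=float), (p,))
    t = np.arange(p, len(y))
    lags = np.column_stack([y[t - i] for i in range(1, p + 1)])
    u = (x0 - lags) / h
    # product of Gaussian kernels; normalizing constants cancel in the ratio
    w = np.exp(-0.5 * np.sum(u ** 2, axis=1))
    return float(np.sum(w * y[t]) / np.sum(w))

# A series alternating between 1 and -1: after a 1 the next value is -1.
alt = np.array([1.0, -1.0] * 10)
pred = nw_forecast(alt, x0=[1.0], h=0.1)
```

The estimate is a weighted average of past responses, with the largest weights on the dates whose lagged values were closest to `x0`.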

Model Estimation: Choosing \( h \)

Cross Validation:

1 Split the whole sample into m parts with the same number of data points (e.g. 5 folds, 10 folds, or leave-one-out CV).

2 Pick a candidate h. Use m-1 parts to do the kernel estimation and use the remaining part to compute the test MSE. Repeat so that each of the m parts serves once as the test set.

3 Repeat step 2 for different values of h. Choose the h that minimizes the MSE.
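The three steps can be sketched as follows. The names `nw_predict` and `cv_bandwidth`, the Gaussian kernel, the single bandwidth shared by all lags, the contiguous folds, and the simulated data are all assumptions made for this illustration.

```python
import numpy as np

def nw_predict(X_train, y_train, X_new, h):
    """Gaussian-kernel regression predictions at the rows of X_new."""
    u = (X_new[:, None, :] - X_train[None, :, :]) / h
    w = np.exp(-0.5 * np.sum(u ** 2, axis=2))
    # guard against 0/0 when every weight underflows to zero
    return (w @ y_train) / np.maximum(w.sum(axis=1), 1e-300)

def cv_bandwidth(y, p, hs, m=5):
    """m-fold cross-validation over candidate bandwidths hs."""
    y = np.asarray(y, dtype=float)
    t = np.arange(p, len(y))
    X = np.column_stack([y[t - i] for i in range(1, p + 1)])
    resp = y[t]
    idx = np.arange(len(resp))
    folds = np.array_split(idx, m)               # step 1: m parts of (nearly) equal size
    scores = []
    for h in hs:                                 # step 3: loop over candidate h
        sq_err = 0.0
        for test in folds:                       # step 2: each part is the test set once
            train = np.setdiff1d(idx, test)
            pred = nw_predict(X[train], resp[train], X[test], h)
            sq_err += np.sum((resp[test] - pred) ** 2)
        scores.append(sq_err / len(resp))
    return hs[int(np.argmin(scores))], scores

# Simulated nonlinear AR(1) data (assumed form).
rng = np.random.default_rng(0)
y = np.zeros(200)
for t in range(1, 200):
    y[t] = np.sin(2 * y[t - 1]) + 0.3 * rng.standard_normal()

hs = [0.05, 0.1, 0.2, 0.5, 1.0]
best_h, scores = cv_bandwidth(y, p=1, hs=hs, m=5)
```

Very small bandwidths tend to overfit the training folds and very large ones oversmooth, so the cross-validated MSE is typically smallest at an intermediate value.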

Forecasting Performance

Forecasts are precise for "in-sample" points, i.e. when the new sample point lies between the minimum and maximum values of the old sample set.